Apple has quietly acquired a Mountain View-based startup, WaveOne, that was developing AI algorithms for compressing video.

Apple wouldn’t confirm the sale when asked for comment. But WaveOne’s website was shut down around January, and several former employees, including one of WaveOne’s co-founders, now work within Apple’s various machine learning groups.

WaveOne’s former head of sales and business development, Bob Stankosh, announced the sale in a LinkedIn post published a month ago.

“After almost two years at WaveOne, last week we finalized the sale of the company to Apple,” Stankosh wrote. “We started our journey at WaveOne, realizing that machine learning and deep learning video technology could potentially change the world. Apple saw this potential and took the opportunity to add it to their technology portfolio.”

WaveOne was founded in 2016 by Lubomir Bourdev and Oren Rippel, who set out to take the decades-old paradigm of video codecs and make it AI-powered. Prior to joining the venture, Bourdev was a founding member of Meta’s AI research division, and both he and Rippel worked on Meta’s computer vision team responsible for content moderation, visual search and feed ranking on Facebook.

With standard video codecs, compression happens on the content provider’s side (e.g. YouTube servers), while end users’ machines handle decompression. It’s an effective approach, but new codecs require new hardware specially built to accelerate compression or decompression, making improvements slow to propagate.

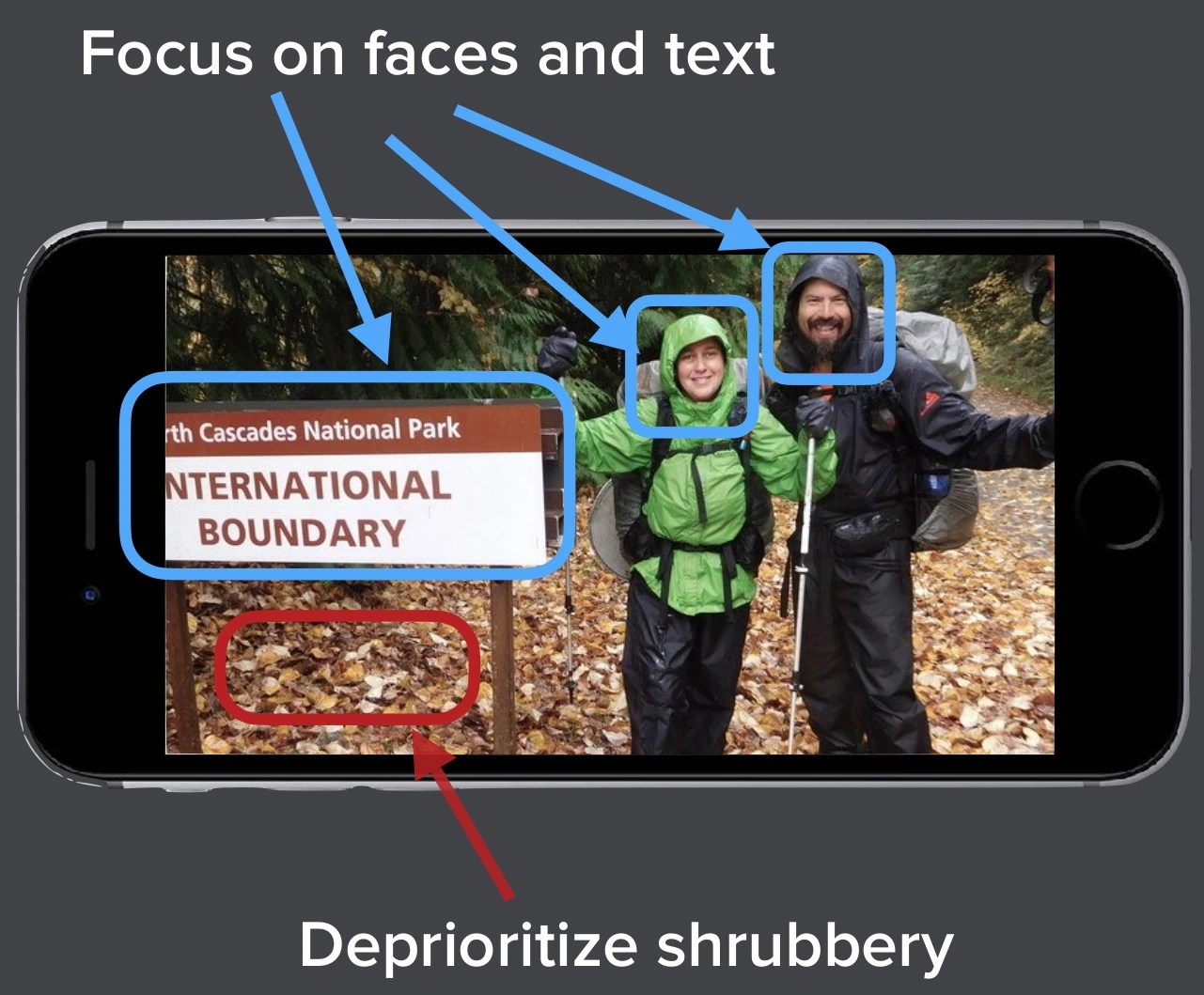

WaveOne’s main innovation was a “content-aware” video compression and decompression algorithm that could run on the AI accelerators built into many phones and an increasing number of PCs. Leveraging AI-powered scene and object detection, the startup’s technology could essentially “understand” a video frame, allowing it to, for example, prioritize faces at the expense of other elements within a scene to save bandwidth.
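To make the "content-aware" idea concrete, here is a minimal Python sketch of importance-weighted bit allocation. It is an illustration only, not WaveOne's algorithm: the region names, pixel counts, importance weights and the `allocate_bits` helper are all hypothetical, and a real codec would get its regions from a detector rather than a hand-written table.

```python
def allocate_bits(regions, total_bits):
    """Split a frame's bit budget across regions.

    Each region's share is proportional to (pixel count * importance),
    so high-importance regions get more bits per pixel.
    """
    weights = {
        name: pixels * importance
        for name, (pixels, importance) in regions.items()
    }
    total_weight = sum(weights.values())
    # Integer division rounds down, so the budget is never exceeded.
    return {
        name: (total_bits * int(weight)) // int(total_weight)
        for name, weight in weights.items()
    }


# Hypothetical detector output: (pixel count, importance weight).
regions = {
    "face": (20_000, 8),
    "background": (180_000, 1),
}

budget = allocate_bits(regions, total_bits=100_000)

# Bits spent per pixel in each region.
bits_per_pixel = {
    name: budget[name] / regions[name][0] for name in regions
}
print(budget)
print(bits_per_pixel)
```

In this toy setup the face covers a tenth of the frame but receives a much larger share of the bits per pixel than the background, which is the trade-off the startup described.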

WaveOne also claimed that its video compression tech was robust to sudden disruptions in connectivity. That is to say, it could make a “best guess” based on whatever bits it had available, so when bandwidth was suddenly restricted, the video wouldn’t freeze; it’d just show less detail for the duration.
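WaveOne did not publish how its "best guess" decoding worked, but the general behavior resembles progressive coding, where the most important bits are sent first and the decoder works with whatever has arrived. The sketch below uses plain bit-plane coding as a stand-in technique; the function names and sample pixel values are assumptions made for illustration.

```python
def encode_planes(pixels, bits=8):
    """Split pixel values into bit planes, most significant plane first."""
    return [[(p >> b) & 1 for p in pixels] for b in range(bits - 1, -1, -1)]


def decode_planes(planes, n_pixels, bits=8):
    """Rebuild pixels from however many planes were received.

    Missing low-order planes are replaced by the midpoint of their
    possible range, which serves as the decoder's best guess.
    """
    values = [0] * n_pixels
    for i, plane in enumerate(planes):
        shift = bits - 1 - i
        for j, bit in enumerate(plane):
            values[j] |= bit << shift
    missing = bits - len(planes)
    if missing > 0:
        fill = 1 << (missing - 1)
        values = [v | fill for v in values]
    return values


def max_error(original, decoded):
    """Largest absolute difference between two pixel lists."""
    return max(abs(a - b) for a, b in zip(original, decoded))


pixels = [12, 200, 97, 255, 0]  # hypothetical 8-bit samples
planes = encode_planes(pixels)

full = decode_planes(planes, len(pixels))        # all 8 planes arrived
most = decode_planes(planes[:5], len(pixels))    # bandwidth dipped
few = decode_planes(planes[:3], len(pixels))     # bandwidth dropped sharply

print(max_error(pixels, full), max_error(pixels, most), max_error(pixels, few))
```

The decoder always produces a frame; fewer received planes simply mean a coarser approximation, which matches the "less detail, no freeze" behavior described above.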

WaveOne claimed its approach, which was hardware-agnostic, could reduce the size of video files by as much as half, with better gains in more complex scenes.

Investors saw the potential, apparently. Prior to the Apple acquisition, WaveOne attracted $9 million from backers including Khosla Ventures, Vela Partners, Incubate Fund, Omega Venture Partners and Blue Ivy.

So what might Apple want with an AI-powered video codec? Well, the obvious answer is more efficient streaming. Even minor improvements in video compression could save on bandwidth costs, or enable services like Apple TV+ to deliver higher resolutions and framerates depending on the type of content being streamed.

YouTube’s already doing this. Last year, Alphabet’s DeepMind adapted a machine learning algorithm originally developed to play board games to the problem of compressing YouTube videos, leading to a 4% reduction in the amount of data the video-sharing service needs to stream to users.

Perhaps we’ll see similar innovations from the Apple-owned WaveOne team soon.
