Mage, developing an artificial intelligence tool for product developers to build and integrate AI into apps, brought in $6.3 million in seed funding led by Gradient Ventures.

Founder Tommy Dang started the company at the end of 2020 after building internal low-code tools at Airbnb. While collaborating with product developers, Dang saw that they wanted to use AI but didn’t have the right tools to do so without relying on data scientists.

“We worked with hundreds of developers who had great machine learning tools and internal systems to launch models, but there were not many who knew how to use the tools,” Dang told TechCrunch. “They didn’t work with machine learning extensively, so we decided to build tools for technical non-experts. We are like Stripe for AI, making it easier for developers to put AI into apps.”

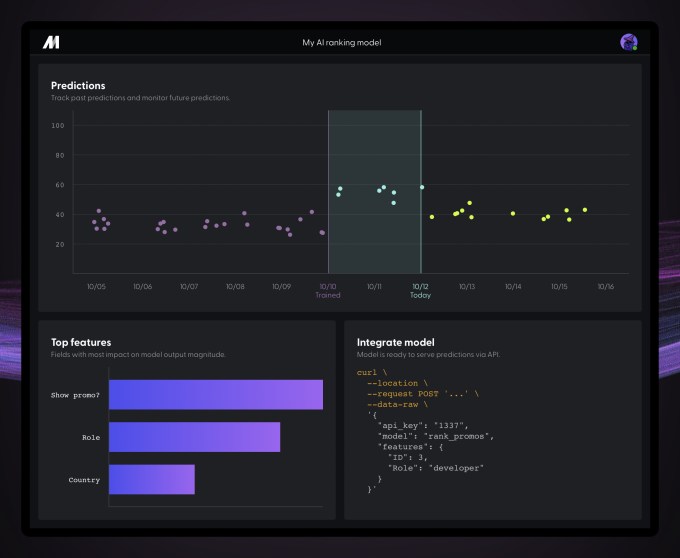

The market for AI tools is expected to reach $126 billion by 2025, but most of those tools continue to be geared toward people with experience in AI. Mage’s technology is a low-code, cloud-based tool and user interface with a shared workspace similar to Figma. Users can add data by uploading a file, streaming data or connecting to a data warehouse. From there, they can build models and select from use cases such as churn prevention, ranking and matching users. After creating a model, users can review it, improve on it and then download it to a file, connect it back to the data warehouse or deploy it into an API or app.

Joining Gradient in the round were Neo, Designer Fund and a group of angel investors, including Unity CEO John Riccitiello, Behance founder Scott Belsky, Lenny’s Newsletter author Lenny Rachitsky and James Beshara.

Darian Shirazi, general partner at Gradient Ventures, said via email that he found Mage while looking for an investment in the machine learning infrastructure space that didn’t require data engineering experience. He saw that most of the recently funded companies were infrastructure-heavy and facilitated large jobs for data scientists and machine learning engineers.

Shirazi saw a market asking for technologies and systems that enabled non-data scientists to leverage AI and machine learning. Shirazi found that in Mage. He had met Dang while at UC Berkeley and later reconnected while Dang was at Airbnb. He believes that if “Mage succeeds in providing the easiest tools for leveraging AI and machine learning, they will transform how everyone does business.”

“There is a strong appetite from companies and individuals to leverage technologies and systems that are currently only accessible to domain experts such as data scientists, ML engineers and AI researchers,” he added. “The reality is that the number of applications for AI/ML are endless. There needs to be simple tools to allow anyone to leverage machine learning, without requiring a deep understanding of math, computer science or data science.”

He considers Mage’s “superpower” to be “the nexus of data quality tools and interoperability of ML models and features.” Shirazi expects the company to eventually have a marketplace of different models and tools for manipulating and combining data sets, like for marketing, sales, product and finance.

Mage is still in beta but is working with small businesses, and Dang said the company plans for its self-service feature to go live in early 2022. Behind the scenes, the company is hiring for product design and engineering and also intends to use the new capital to build out additional AI tools and expand internationally.

Dang said the company wasn’t focused on revenue at the moment, but it has had a group of paying customers from the beginning. These early clients are helping Mage by trying out the features, he added.

“Our next steps are to launch to general availability where you can onboard yourself,” Dang said. “The need for machine learning is a global need, and not many others emphasize making tools accessible. We have a community of developers that want to expand their skill set and grow their toolkits.”
