While generative AI is buzzy right now, what OpenAI, Microsoft and Google are doing may be only part of the story. There is also the prospect of using biology: the idea of growing biocomputers from stem cells that could potentially be smarter and more energy-efficient than the systems we are used to today.

Australian startup Cortical Labs popped up on the radar after Amazon CTO Werner Vogels flew down to Australia to visit its lab recently and even wrote about it, calling it "intriguing."

Cortical combines synthetic biology and human neurons to develop what it claims is a new class of AI, known as "Organoid Intelligence" (OI).

It's now raised a $10 million funding round led by Horizons Ventures, with participation from LifeX (Life Extension) Ventures (the launch of which we covered last year), Blackbird Ventures, Radar Ventures and In-Q-Tel (the venture arm of the CIA).

The company says it is already in the process of fulfilling orders for its technology.

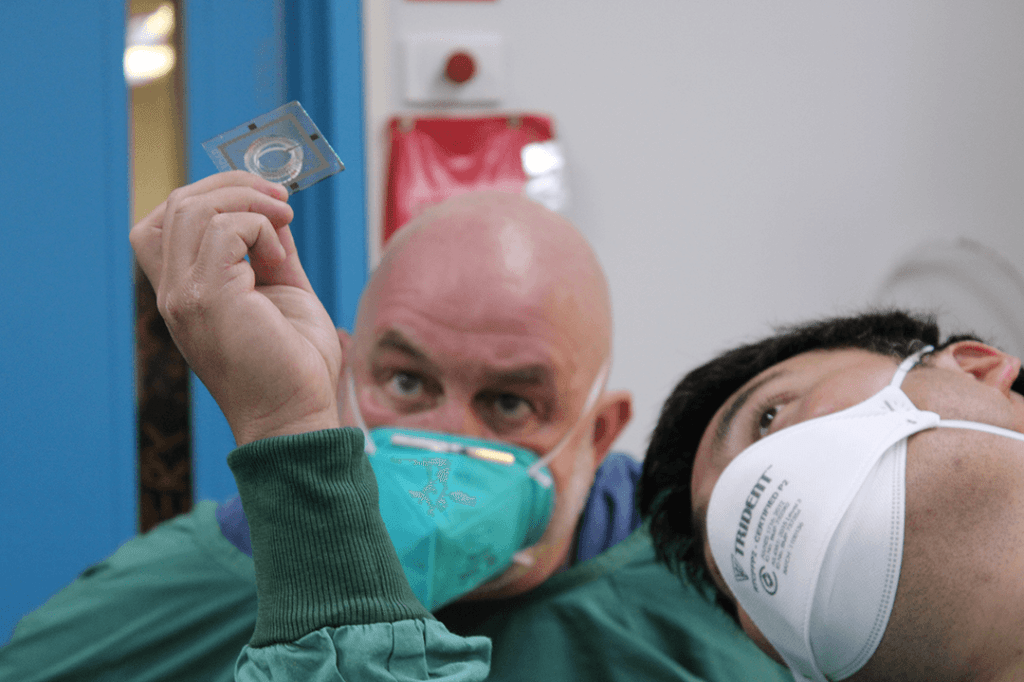

The system uses clusters of lab-cultivated neurons derived from human stem cells to form what the company calls a "DishBrain," which is then hooked up to hard silicon to create what it describes as a Biological Intelligence Operating System (biOS).

Some observers say this is the future of AI because human neurons could be better than any digital AI model at generalized intelligence, given that they are self-programming and consume far less energy.

Hon Weng Chong, CEO and founder of Cortical Labs, said in a statement: “The possibilities that a hybridised AI meets synthetic biology model can unlock are limitless, accelerating the possibilities of digital AI in a more powerful and more sustainable way.”


Jonathan Tam of Horizons Ventures said: “Ultimately, by being able to use these systems to better understand, and eventually harness, how neurons display intelligence, it will open up a plethora of applications, including a revolution in personalized medicine and disease detection.”

Cortical Labs’ technology first appeared in the scientific journal Neuron in October 2022, demonstrating that neurons in a petri dish can be encouraged to play the computer game Pong.

This sounds trivial, but as Weng Chong told me over email, this could allow the development and testing of new drugs and therapies, plus “if you took your blood and made them into neurons then this drug discovery becomes even more personalizable – the results would be tailored specifically for you only.”

He also says that competition in the space is low: “It doesn’t directly compete with anything because this is the first of its kind pioneering the field of Organoid Intelligence. Organoid Intelligence has the potential to learn faster and use far less energy than any other AI system in existence. GPT is so smart because it ingested all of the Internet, however you or I don’t have to for us to have pretty good conversational skills.”

“It took at least 10 years since Geoff Hinton and Alex Krizhevsky put a GPU to do Deep Learning that we’ve gotten to where we are today. We’re still in the early days of this technology,” he added.

In the near term, he says, one application is to drip a new drug onto the cells to test it. If the cells can no longer play Pong, you know the drug doesn't work: "Not only is the efficacy able to be better determined but also the cognitive side-effects (brain fog) can be elucidated as we now have a potential assay for cognition in the form of neurons playing the Pong game."

He says the technology could also be used to study dementia and even to "brute force" test "the compounds that we have discovered using Quantum Computing and Generative AI."

And, potentially further into the future, "if the number and complexity of these neurons are scaled, the end result would be familiar to us as fully embodied organisms such as a cat, dog, or human."

Hold on to your hats, people.
