FundamentalVR, an immersive simulation platform for medical and healthcare professions, has raised $20 million in a round of funding to “accelerate skill-transfer and surgical proficiency” through virtual reality (VR) and mixed reality (MR) applications.

Despite its decades-long promise, VR hasn’t traveled too far beyond gaming circles or niche industrial use-cases, though this is something that Meta and its Big Tech ilk are pushing aggressively to change. However, among the industries that have long embraced VR are medicine and healthcare. By way of example, back in 2009, a neurosurgeon in Canada used a VR-based simulator to carry out a dry-run of a real brain tumor surgery in what was thought to be a world first at the time. More recently, VR has been used in all manner of healthcare scenarios, from treating social anxiety and other mental health conditions to surgical training.

Big impact

Medical simulation serves as a powerful example of how VR and related MR systems are having a meaningful societal impact away from the mainstream gaze, with such technologies now regularly used to train new doctors or help surgeons maintain existing skills and learn new procedures. Data from Research and Markets suggests that the healthcare and medical simulation market is a $2 billion industry today, a figure that’s predicted to double within five years — and this is something that FundamentalVR is looking to capitalize on.

Founded out of London in 2012, FundamentalVR is a software-as-a-service (SaaS) platform that combines VR with haptics to give medical professionals access to training in orthopedic joint and spine procedures; anterior total hip replacement (A-THR); posterior total hip replacement (P-THR); total knee arthroplasty (TKA); facetectomies; and more.

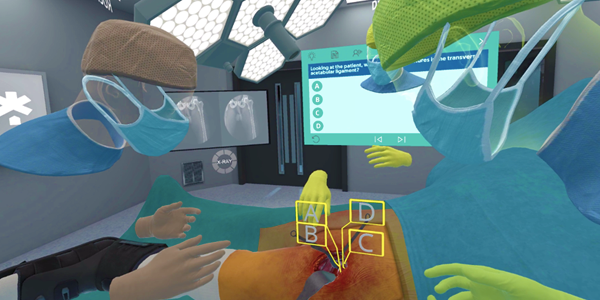

At the heart of the company’s so-called Fundamental Surgery platform is what it calls HapticVR, which makes virtual procedures more life-like through physical sensory feedback — HapticVR is compatible with myriad handheld devices, including haptic gloves and purpose-built controllers.

It’s worth noting that the company can provide the hardware when specific partners and institutions require that as part of a commercial agreement, but for the most part FundamentalVR is the engine and interface for companies’ own existing hardware, including VR headsets such as Oculus Quest and HTC Vive, as well as MR platforms such as HoloLens and Magic Leap.

“It is designed to be hardware-agnostic, and able to work with any laptop, VR headset and haptic equipment that is readily available on the open market on Amazon or specialist electrical stores,” FundamentalVR CEO Richard Vincent explained to TechCrunch. “This makes the solutions highly scalable and affordable.”

On top of that, FundamentalVR also allows an unlimited number of users to interact in virtual classrooms and operating theaters around the world.

Feedback

There are numerous players in the burgeoning medical simulation space, such as Medical Realities, ImmersiveTouch and Osso VR, the last of which recently closed a $66 million round of funding. However, Vincent is adamant that what really sets FundamentalVR apart is its more holistic, life-like haptics, which blend cutaneous feedback (tactile vibration) with kinesthetic feedback, including force feedback and positional haptics.

“Surgery is a multisensory skill — touch is pivotal in enabling the surgeon to learn and carry out procedures and a requirement in truly acquiring surgical skills,” Vincent said. “However, not all haptics are created equal, and there is a huge difference between cutaneous and kinesthetics haptics technology and how it can be used. While VR simulations with cutaneous feedback are competent in medical education and training to help acquire knowledge, the addition of ‘full-force’ haptics now allows for the acquisition of skills.”

FundamentalVR’s customers include medical institutions, device manufacturers, and even pharmaceutical firms bringing new therapies to market — this includes Swiss-American multinational Novartis, which used FundamentalVR to create a haptic simulation for a sub-retinal injection. Other clients include Mayo Clinic, NYU Langone, and UCLA in the U.S.; UCLH and Imperial College in the U.K.; and Sana Kliniken, a teaching hospital network in Germany.

FundamentalVR’s latest round of funding was led by EQT Life Sciences, with participation from Downing Ventures. The company has now raised just over $30 million in total.
