Meetings can go one of two ways: they are either productive or ineffective. Read AI wants all meeting goers to be engaged and feel productive, and it has developed a real-time shared dashboard that alerts participants when a meeting is going well or not so well.

The Seattle-based company emerged from stealth mode Wednesday with its first financing, a $10 million seed round, led by Madrona Venture Group, and joined by PSL Ventures and a group of angel investors, including former Placed board member David Joerg, Verishop CEO Imran Khan, AI2 CEO Oren Etzioni, Qumulo CEO Bill Richter, Wunderman CEO Shane Atchison, Divvy founder Brian Ma, Snapchat product VP Peter Sellis and Snapchat engineering VP Nima Khajehnouri.

Read was co-founded in May by a team whose members have known one another for the past 20 years: CEO David Shim, former CEO of Foursquare; vice president of engineering Rob Williams; and vice president of data science Elliott Waldron. The trio previously worked together at location analytics startup Placed, where Shim was also CEO. Placed was acquired by Snapchat in 2017 and spun out into Foursquare in 2019.

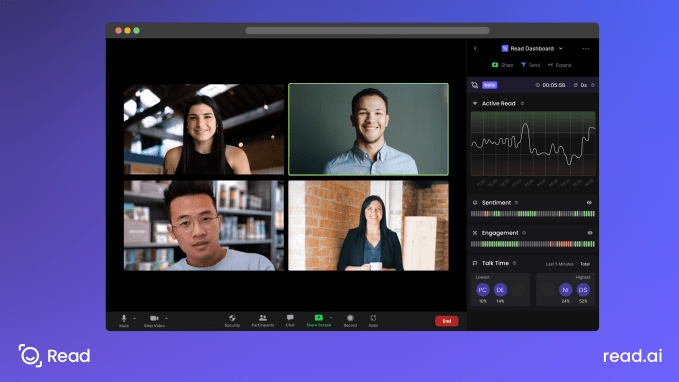

The company’s first product, Read Dashboard, is a dashboard for virtual meetings that leverages artificial intelligence, computer vision and natural language processing to measure engagement, performance and sentiment among participants.

“Read Dashboard is like ‘Waze for meetings,’ ” Shim told TechCrunch. “We want to make collaboration easier, so it gives you analytics on how the meeting is going.”

Instead of checking each two-inch video box to read a participant’s emotions, users get real-time graphs and meters from Read that show whether one or more people are dominating the conversation, whether people seem bored or inattentive, and whether they seem happy or sad about what is being said. For example, on a sales call, Read can show when the conversation is quickly trending negative, so you can either stop talking or pivot to another topic, Shim said.

Currently, the dashboard is available as a free service on Zoom through a Google Calendar integration. It notifies attendees when it is activated, and it can be removed from a meeting. As more use cases are supported, the company will add features that can be accessed for a fee, he added.

As part of the investment, Matt McIlwain, managing director at Madrona Venture Group, is joining Read’s board of directors.

McIlwain also worked with Shim when they were both at Farecast, which was bought by Microsoft in 2008. They stayed in contact, and McIlwain was also a seed investor in Placed.

He regards Shim as someone who “has a good eye for having transparency and for creating products that are valuable to all constituents.” With Placed and Foursquare, McIlwain said he could see that Shim was “very hungry to make an impact and make a difference for the world.”

Now with Read, McIlwain said, the co-founders have complementary skills and are building something that will last now that companies operate in a digital-first world, where it will be important to know if someone is not engaged enough or is talking too much or too little.


Meanwhile, Read decided to pursue seed funding for two reasons: first, the global video conferencing market is big, valued at $4.2 billion in 2020; and second, the company is seeing massive adoption, Shim said. It is also seeing additional use cases, such as weekly stand-ups, where it has been difficult to get a good read on people because so many are on the call.

“We could have gone slower and built out over a few years, but we could see the need right now,” he added. “Half of all meetings are considered unproductive. We used to spend 30 or 60 minutes in meetings, and now it is hours a day in virtual conferences.”

In less than five months, Read has grown to 15 employees, a pace Shim contrasted with his experience at Placed, where it took 15 months to reach the same milestone.

The new funding will go toward adding even more staff. The company also plans to support other platforms, such as WebEx, Teams and Google Meet, and will eventually provide integrations with other areas of the digital world, including Facebook and Apple Glass, Shim said.

“There is a huge opportunity for someone to get hints,” he added. “The more we can facilitate the ability to help people with social cues, the better the interactions will be.”
